Faculty of Science – Informatics Institute

-

Publicatiedatum 18 juni 2015

-

Opleidingsniveau Universitair

-

Salarisindicatie €2,125 to €4,551 gross per month

-

Sluitingsdatum 31 augustus 2015

-

Functieomvang 38 hours per week

-

Vacaturenummer 15-233

The Faculty of Science holds a leading position internationally in its fields of research and participates in a large number of cooperative programs with universities, research institutes and businesses. The faculty has a student body of around 4,000 and 1,500 members of staff, spread over eight research institutes and a number of faculty-wide support services. A considerable part of the research is made possible by external funding from Dutch and international organizations and the private sector. The Faculty of Science offers thirteen Bachelor's degree programs and eighteen Master’s degree programs in the fields of the exact sciences, computer science and information studies, and life and earth sciences.

Since September 2010, the whole faculty has been housed in a brand new building at the Science Park in Amsterdam. The installment of the faculty has made the Science Park one of the largest centers of academic research in the Netherlands.

The Informatics Institute is one of the large research institutes with the faculty, with a focus on complex information systems divided in two broad themes: 'Computational Systems' and 'Intelligent Systems.' The institute has a prominent international standing and is active in a dynamic scientific area, with a strong innovative character and an extensive portfolio of externally funded projects.

Project description

This summer Qualcomm, the world-leader in mobile chip-design, and the University of Amsterdam, a world-leading computer science department, have started a joint research lab in Amsterdam, the Netherlands, as a great opportunity to join the best of academic and industrial research. Leading the lab are profs. Max Welling (machine learning), Arnold Smeulders (computer vision analysis), and Cees Snoek (image categorization).

The lab will pursue world-class research on the following eleven topics:

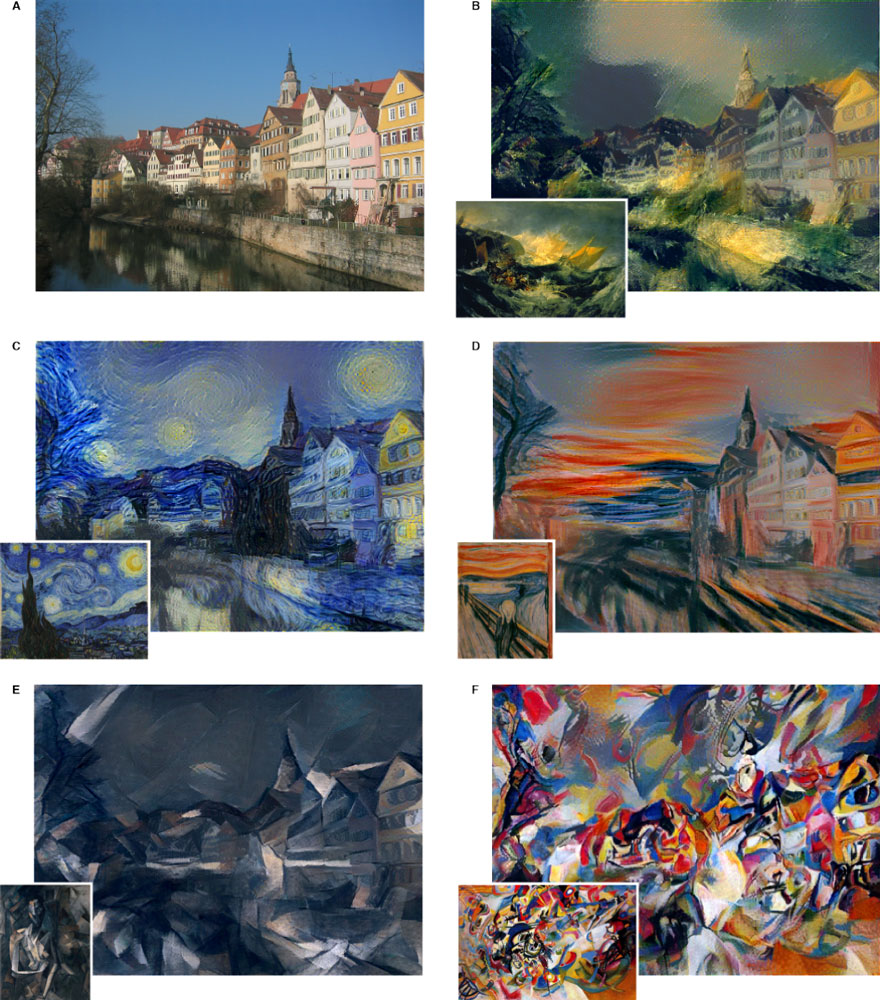

Project 1 CS: Spatiotemporal representations for action recognition. Automatically recognize actions in video, preferablywhich action appears when and where as captured by a mobile phone, and learned from example videos and without example videos.

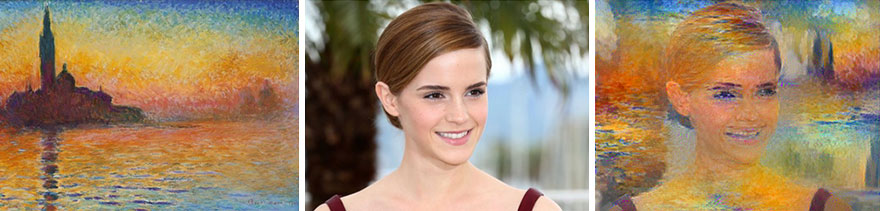

Project 2 CS: Fine-grained object recognition. Automatically recognize fine-grained categories with interactive accuracy by using very deep convolutional representations computed from automatically segmented objects and automatically selected features.

Project 3 CS: Personal event detection and recounting.Automatically detect events in a set of videos with interactive accuracy for the purpose of personal video retrieval and summarization. We strive for a generic representation that covers detection, segmentation, and recounting simultaneously, learned from few examples.

Project 4 CS: Counting. The goal of this project is to accurately count the number of arbitrary objects in an image and video independent of their apparent size, their partial presence, and other practical distractors. For use cases as in Internet of Things or robotics.

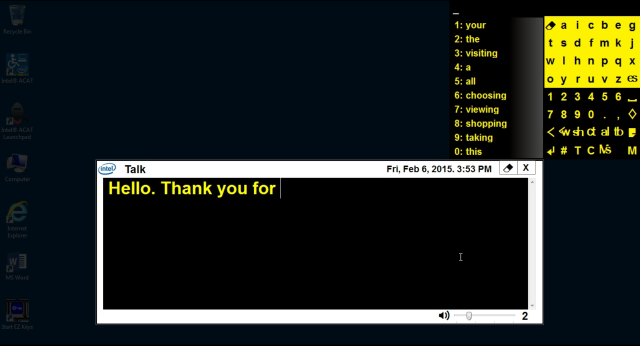

Project 5 AS: One shot visual instance search. Often when searching for something, a user will have available just 1 or very few images of the instance of search with varying degrees of background knowledge.

Project 6 AS: Robust Mobile Tracking. In an experimental view of tracking, the objective is to track the target’s position over time given a starting box in frame 1 or alternatively its typed category especially for long-term, robust tracking.

Project 7 AS: The story of this. Often when telling a story one is not interested in what happens in general in the video, but what happens to this instance (a person, a car to pursue, a boat participating in a race). The goal is to infer what the target encounters and describe the events that occur it.

Project 8 AS: Statistical machine translation. The objective of this work package is to automatically generate grammatical descriptions of images that represent the meaning of a single image, based on the annotations resulting from the above projects.

Project 9 MW: Distributed deep learning. Future applications of deep learning will run on mobile devices and use data from distributed sources. In this project we will develop new efficient distributed deep learning algorithms to improve the efficiency of learning and to exploit distributed data sources.

Project 10 MW: Automated Hyper-parameter Optimization. Deep neural networks have a very large number of hyper-parameters. In this project we develop new methods to automatically and efficiency determine these hyperparameters from data for deep neural networks.

Project 11 MW: Privacy Preserving Deep Learning. Training deep neural networks from distributed data sources must take privacy considerations into account. In this project we will develop new distributed and privacy preserving learning algorithms for deep neural networks.

Requirements

PhD candidates

-

Master degree in Artificial Intelligence, Computer Science, Physics or related field;

-

excellent programming skills (the project is in Matlab, Python and C/C++);

-

solid mathematics foundations, especially statistics and linear algebra;

-

highly motivated;

-

fluent in English, both written and spoken;

-

proven experience with computer vision and/or machine learning is a big plus.

Postdoctoral researchers

-

PhD degree in computer vision and/or machine learning;

-

excellent publication record in top-tier international conferences and journals;

-

strong programming skills (the project is in Matlab, Python and C/C++);

-

motivated and capable to coordinate and supervise research.

Further information

Informal inquiries on the positions can be sent by email to:

Appointment

Starting date: before Fall 2015.

The appointment for the PhD candidates will be on a temporary basis for a period of 4 years (initial appointment will be for a period of 18 months and after satisfactory evaluation it can be extended for a total duration of 4 years) and should lead to a dissertation (PhD thesis). An educational plan will be drafted that includes attendance of courses and (international) meetings. The PhD student is also expected to assist in teaching of undergraduates.

Based on a full-time appointment (38 hours per week) the gross monthly salary will range from €2,125 in the first year to €2,717 in the last year. There are also secondary benefits, such as 8% holiday allowance per year and the end of year allowance of 8.3%. The Collective Labour Agreement (CAO) for Dutch Universities is applicable.

The appointment of the postdoctoral research fellows will be full-time (38 hours a week) for two years (initial employment is 12 months and after a positive evaluation, the appointment will be extended further with 12 months). The gross monthly salary will be in accordance with the University regulations for academic personnel, and will range from €2.476 up to a maximum of €4.551 (scale 10/11) based on a full-time appointment depending on qualifications, expertise and on the number of years of professional experience. The Collective Labour Agreement for Dutch Universities is applicable. There are also secondary benefits, such as 8% holiday allowance per year and the end of year allowance of 8.3%.

Some of the things we have to offer:

-

competitive pay and good benefits;

-

top-50 University worldwide;

-

interactive, open-minded and a very international city;

-

excellent computing facilities.

English is the working language in the Informatics Institute. As in Amsterdam almost everybody speaks and understands English, candidates need not be afraid of the language barrier.

Job application

Applications may only be submitted by sending your application to application-science@uva.nl. To process your application immediately, please quote the vacancy number 15-233 and the position and the project you are applying for in the subject-line. Applications must include a motivation letter explaining why you are the right candidate, curriculum vitae, (max 2 pages), a copy of your Master’s thesis or PhD thesis (when available), a complete record of Bachelor and Master courses (including grades), a list of projects you have worked on (with brief descriptions of your contributions, max 2 pages) and the names and contact addresses of two academic references. Also indicate a top-3 of projects you would like to work on and why. All these should be grouped in one PDF attachment.

http://www.uva.nl/over-de-uva/werken-bij-de-uva/vacatures/item/15-233.html?f=Qual

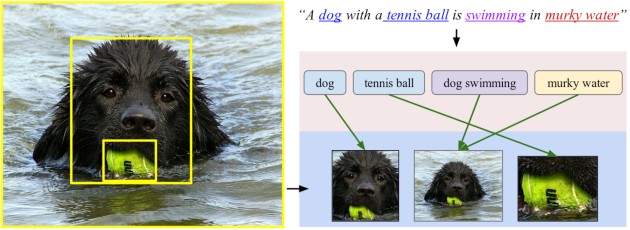

India’s online marketplace Flipkart has started rolling out image search on its mobile app to improve the shopping experience.

India’s online marketplace Flipkart has started rolling out image search on its mobile app to improve the shopping experience.