Article From http://papersincomputerscience.org/2009/03/26/color-indexing/

Citation: Swain, M. J. and Ballard, D. H. Color indexing. International Journal of Computer Vision 7, 1 (Nov. 1991), 11-32.

Abstract: Computer vision is embracing a new research focus in which the aim is to develop vision skills for robots that allow them to interact with a dynamic, realistic environment. To achieve this aim, new kinds of vision algorithms need to be developed which run in real time and subserve the robot’s goals. Two fundamental goals are [identifying an object at a known location and] determining the location of a known object. Color can be successfully used for both tasks.

This article demonstrates that color histograms of multicolored objects provide a robust, efficient cue for indexing into a large database of models. It shows that color histograms are stable object representations in the presence of occlusion and over change in view, and that they can differentiate among a large number of objects. For solving the identification problem, it introduces a technique called Histogram Intersection, which matches model and image histograms and a fast incremental version of Histogram Intersection, which allows real-time indexing into a large database of stored models. For solving the location problem it introduces an algorithm called Histogram Backprojection, which performs this task efficiently in crowded scenes.

Discussion: This 1991 computer vision paper introduced the concept of identifying and finding objects in images using their color histograms.

In 1991, as computer vision systems were moving away from offline processing of static photographs and into real-time use by mobile robots with inexpensive cameras attached, there was a pressing need for efficient algorithms for doing simple vision tasks like identifying, finding, and tracking objects. Until the publication of this paper, most of these techniques were based on the most obvious attribute, shape recognition, but this was both computationally expensive and fragile: the slightest rotation or occlusion of an object (stuff going in front of it) could radically alter its perceived shape. Color-based recognition is more robust: the colors of an object don’t change very much as it moves, rotates, or becomes occluded by other objects. It can work with extremely low-resolution images (in one experiment, the authors got acceptable performance on 8 × 5 pixel images!). On the other hand, it’s very sensitive to the color and intensity of lighting, and the image has to be normalized to account for this. It also, obviously, has difficulty distinguishing objects that have similar colors.

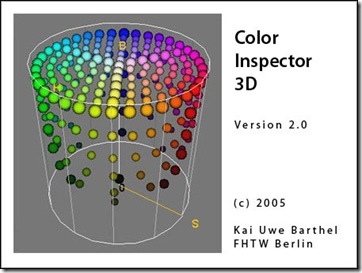

The details of the system are simple: divide the space of all colors up into fairly large “buckets” - typical would be 16 buckets along each color axis (e.g. red, green, blue). For example, with 8-bit channels and 16 buckets per axis, each bucket spans 16 values, so the RGB color (170, 24, 255) falls into bucket (10, 1, 15). Then, for each object you want to recognize or find, take a model photo of it and count the number of pixels falling into each bucket; this is called the color histogram. To compare two color histograms, they use the Histogram Intersection operator: this takes the minimum of the two counts (one from each image) for each bucket, and then adds them up. This value is normalized by dividing it by the number of pixels in the model histogram. Images with very similar histograms will have intersection values close to 1, whereas ones with different histograms will have much smaller values. By comparing the histogram of an image to be identified against the precomputed histogram of each model in a database, the system can rapidly find the best-matching model.
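The bucketing and intersection steps described above can be sketched in a few lines of Python. This is only an illustration, not the authors' code: the function names (`bucket`, `color_histogram`, `histogram_intersection`, `identify`) are hypothetical, pixels are assumed to be 8-bit RGB tuples, and the score is divided by the model's pixel count, which is an assumption about the paper's normalization.

```python
from collections import Counter

BINS_PER_AXIS = 16                  # assumed: 16 buckets per channel, as in the text
BIN_WIDTH = 256 // BINS_PER_AXIS    # each bucket spans 16 of the 256 channel values


def bucket(pixel):
    """Map an 8-bit (r, g, b) pixel to its bucket coordinates."""
    r, g, b = pixel
    return (r // BIN_WIDTH, g // BIN_WIDTH, b // BIN_WIDTH)


def color_histogram(pixels):
    """Count how many pixels fall into each bucket."""
    return Counter(bucket(p) for p in pixels)


def histogram_intersection(image_hist, model_hist):
    """Sum the per-bucket minima, then divide by the model's pixel count."""
    model_total = sum(model_hist.values())
    if model_total == 0:
        return 0.0
    overlap = sum(min(count, image_hist.get(b, 0))
                  for b, count in model_hist.items())
    return overlap / model_total


def identify(image_pixels, models):
    """Return the name of the model whose histogram best matches the image.

    `models` is a hypothetical dict mapping a name to a precomputed histogram.
    """
    image_hist = color_histogram(image_pixels)
    return max(models,
               key=lambda name: histogram_intersection(image_hist, models[name]))
```

With a database such as `{"red": color_histogram(red_pixels), "blue": color_histogram(blue_pixels)}`, calling `identify(pixels, models)` returns the name with the highest intersection score. Only buckets that occur in the model need to be visited, since a bucket with a zero model count contributes nothing to the sum.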

Locating objects is very similar: each pixel is assigned a value based on its bucket and how common that bucket is in the model image of the object being sought. The algorithm then looks for a region containing many large values. It does this by convolving the resulting values with a circular mask - effectively “blurring” the image and mixing nearby values - and then taking the pixel with the largest value as the object's location.
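A rough sketch of this location step is given below. The names `backproject` and `locate` are hypothetical, and the per-pixel value used here, the model count divided by the image count for that bucket and capped at 1, is an assumption about how "how common that bucket is" gets turned into a number. The convolution with a circle is done as a brute-force sum over a small disk, which is slow but easy to follow.

```python
from collections import Counter


def bucket(pixel, bin_width=16):
    """Map an 8-bit (r, g, b) pixel to its bucket coordinates."""
    return tuple(channel // bin_width for channel in pixel)


def backproject(image, model_hist):
    """Replace each pixel by min(model_count / image_count, 1) for its bucket.

    `image` is a 2D list of (r, g, b) tuples; the ratio is an assumed scoring rule.
    """
    image_hist = Counter(bucket(p) for row in image for p in row)
    return [[min(model_hist.get(bucket(p), 0) / image_hist[bucket(p)], 1.0)
             for p in row]
            for row in image]


def locate(image, model_hist, radius=1):
    """Sum the backprojected values over a disk and return the best (row, col)."""
    scores = backproject(image, model_hist)
    height, width = len(scores), len(scores[0])
    best_total, best_pos = -1.0, None
    for y in range(height):
        for x in range(width):
            total = 0.0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    yy, xx = y + dy, x + dx
                    inside_disk = dx * dx + dy * dy <= radius * radius
                    if inside_disk and 0 <= yy < height and 0 <= xx < width:
                        total += scores[yy][xx]
            if total > best_total:
                best_total, best_pos = total, (y, x)
    return best_pos
```

The `radius` parameter would normally be chosen to match the expected size of the object in the image; a real implementation would use an optimized convolution rather than nested loops.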

The approach of the paper is actually more general than it sounds: virtually any image feature that you can construct a histogram of can be applied to the same tasks using the same approach. This includes features such as local geometry, local texture, rough estimated size, and so on. The sensitivity of the algorithm to any particular feature can be tuned by adjusting the number of bucket divisions along that dimension. For example, Niblack et al’s QBIC system (1993), still in use today by IBM in DB2, uses color, texture, and shape simultaneously. In 1999 a combination of features using this approach was applied effectively to content-based image retrieval in Tao and Grosky’s “Spatial Color Indexing: A Novel Approach for Content-Based Image Retrieval” (at Citeseer).

Unsurprisingly, the paper demonstrated that the performance of color indexing is severely degraded by changes in lighting; this is one reason that the work did not appear until 1991, after work on color correction and normalization had appeared that could be used in a preprocessing step to cope with these issues. The original paper examined only differences in brightness, and performed a trivial normalization of brightness values to demonstrate its advantage. Dealing with illumination-invariant color matching - particularly when the light changes in color - would be the subject of several later publications such as Matas et al’s “Color-Based Object Recognition under Spectrally Nonuniform Illumination” and “On Representation and Matching of Multi-Coloured Objects” (1995).

The color indexing paper shows its age when it comes to scale: concerned only with robotics applications, the authors considered 66 objects to be a “large database.” Today, object identification, classification, and location are studied primarily in the context of image retrieval and image filtering, where there are frequently millions of images to consider, with thousands of objects and with no control over image conditions. The “incremental” histogram intersection optimization presented in this paper - essentially, only looking at a few of the buckets with the largest counts - enables it to scale to moderate-sized databases, but not to anything as large as modern applications require. Since then, more scalable approaches to color indexing databases have been developed, such as Albuz et al’s “Scalable Color Image Indexing and Retrieval Using Vector Wavelets” (2001) and hierarchical clustering based approaches such as those of Abdel-Mottaleb et al (“Performance Evaluation of Clustering Algorithms for Scalable Image Retrieval”, 1998).
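The idea of looking only at the largest buckets can be illustrated with a simplified sketch. This is not the paper's exact incremental procedure: the name `partial_intersection`, the `top_k` parameter, and the choice to rank buckets by their count in the image being identified are all assumptions made for illustration.

```python
import heapq


def partial_intersection(image_hist, model_hist, top_k=10):
    """Intersect only over the top_k most populated buckets of the image.

    Both histograms are plain dicts mapping a bucket to a pixel count.
    The result is divided by the model's pixel count (an assumed normalization).
    """
    model_total = sum(model_hist.values())
    if model_total == 0:
        return 0.0
    largest = heapq.nlargest(top_k, image_hist.items(), key=lambda kv: kv[1])
    overlap = sum(min(count, model_hist.get(b, 0)) for b, count in largest)
    return overlap / model_total


def best_match(image_hist, models, top_k=10):
    """Return the model name with the highest partial intersection score."""
    return max(models,
               key=lambda name: partial_intersection(image_hist, models[name], top_k))
```

The work per model is bounded by `top_k` rather than by the number of occupied buckets, but every model in the database is still visited, which is consistent with the observation that the trick helps with moderate-sized databases and not with very large ones.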

The paper employs combinatorial reasoning, and a bit of guesswork, to argue that the number of distinct color profiles is sufficiently large to allow a very large number of potential objects to be distinguished. In Stricker and Swain’s “The Capacity of Color Histogram Indexing” (1994, Citeseer) an interesting connection between color indexing and coding theory provides a bound on the actual number of distinguishable images in a color indexing database, which they call its capacity.

However, objects are not necessarily well-distributed among these color profiles in practice; in the original experiments the test objects were relatively easy to identify and distinguish and had clear color histograms, such as household items with illustrated packaging. Humans regularly distinguish objects with nearly identical color histograms, such as different types of trees or different brands of cars of the same color; and also regularly identify objects with very different color histograms as being the same, such as a person wearing two different sets of clothes. Color indexing has not, as far as I am aware, been extended to such difficult identification problems.

A peculiar property of color indexing is that, unlike many other object identification and location methods, it has no clear analogy in human visual processing - indeed, the paper cites work by cognitive psychology researcher Anne Treisman showing that humans have demonstrated poor performance at locating objects based on their colors. I’m not aware of any new psychology research investigating the role of color histograms in object location and attention in humans.

Keith Price maintains a bibliography of papers related to recognition by color indexing.

dcoetzee